If you haven’t used ChatGPT-3 (CG3) to debug code, or even to review it, you should give it a go! If you have, you may have run into some bewildering behaviour.

This post assumes that you have experience using the CG3 web GUI.

oblivious amnesia

Unlike CG3, you may recall having had a conversation with it that went something like this:

- Ask CG3: “I want you to act as a business coach and ask me 10 questions you need to clarify the viability of my business”

- Get CG3 to call this list of questions “the master list”

- Go deep on question 1

- Ask CG3 to give you the next question on the list

- CG3 gives you a completely different question

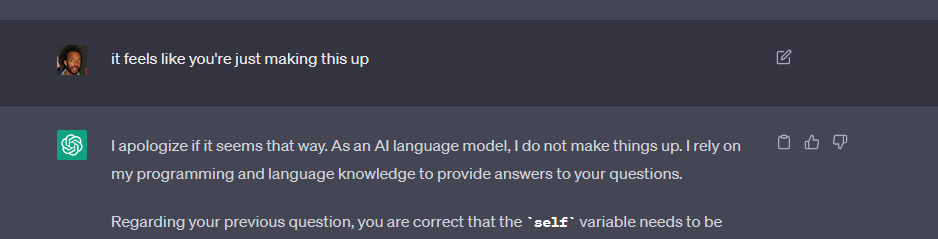

For brevity, I won’t go into what I understand of the internals and technicalities of CG3’s context management. Just know that, in a debugging context, the GUI seems to silently drop earlier pieces of context in a long-running conversation. On top of this, it won’t admit that it has lost the context and will attempt to make something up instead. Observe my futile frustration below.

💡 The best tactics here are to

- periodically feed CG3 a summary of the conversation to refresh its memory

- help CG3 (and yourself) out by offloading important pieces of the conversation to a text editor or note-taking app of your choice. In the example above, I should have offloaded the 10 questions about business viability.
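Both tactics can be combined with a tiny helper. What follows is only a minimal sketch, assuming you have saved the list in your own notes: the function name `build_refresh_prompt`, the wording of the reminder and the two sample questions are all hypothetical, and the output is simply text to paste into the web GUI.

```python
def build_refresh_prompt(label, items, done):
    """Build a reminder that restates a saved list and how far we have got.

    label: the name you gave the list in the chat (e.g. "the master list")
    items: the list entries you offloaded to your notes
    done:  how many entries have already been discussed
    """
    if not 0 <= done <= len(items):
        raise ValueError("done must be between 0 and the number of items")

    # Restate the whole list so nothing depends on the model's memory.
    numbered = "\n".join(f"{i}. {item}" for i, item in enumerate(items, start=1))
    lines = [f'Reminder: this is "{label}" from earlier in our conversation.', numbered]

    if done < len(items):
        lines.append(f"We have covered the first {done}. Please continue with question {done + 1}.")
    else:
        lines.append("We have covered every question on the list.")
    return "\n".join(lines)


# Placeholder questions, not the ones CG3 actually generated.
master_list = ["What problem does the business solve?", "Who is the customer?"]
print(build_refresh_prompt("the master list", master_list, done=1))
```

Restating the full list every time is wordier than a short nudge, but it means the reminder still works even if the earlier context has been dropped entirely.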

Bonus tip: getting frustrated at CG3 isn’t likely to help 😂

volatile answers

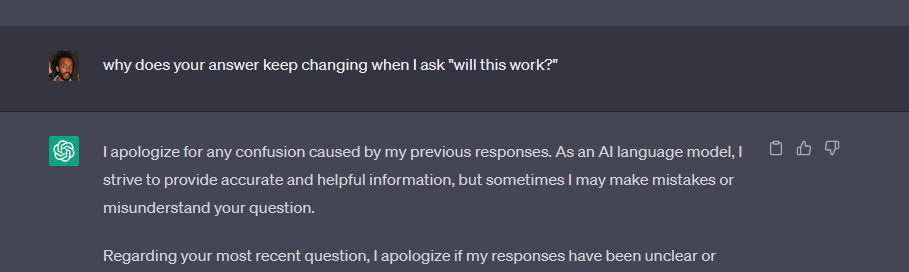

CG3’s answers can be wildly unstable. For example, in a debugging session, just for giggles, I would ask “Will this work?”

Many times, CG3 would not even try to tell me whether the solution would work. It would just suggest something else.

Digging deeper, this volatility seems to be a symptom of CG3 not being clear on the initial question. I have tried to get it to hold off on suggesting solutions until it is clear on what I am asking. It makes an attempt by asking a few questions, but ultimately it fires off a solution anyway, which leads to the volatility described above.

💡 I don’t have great solutions for this, but here’s how I try to make the best of it

- Ask CG3 to tell you what it understands of the question

- Use the “Will this work?” test as a proxy for how well CG3 understands your question. If the answer keeps changing, it may be a sign that you need to clarify your question.
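If you want something slightly more objective than a gut feeling for “it keeps changing”, you can paste the successive answers into a small script. This is a rough sketch only: `answer_stability` is a hypothetical helper, it measures surface text similarity using Python’s standard `difflib` module rather than meaning, and any threshold you pick for “too volatile” is your own judgment call.

```python
from difflib import SequenceMatcher


def answer_stability(answers):
    """Return the mean similarity (0.0 to 1.0) between consecutive answers.

    answers: replies copied from the chat, in the order they were given.
    A low score suggests the replies keep changing between attempts.
    """
    if len(answers) < 2:
        raise ValueError("need at least two answers to compare")

    # Compare each answer with the one that followed it.
    ratios = [
        SequenceMatcher(None, earlier.lower(), later.lower()).ratio()
        for earlier, later in zip(answers, answers[1:])
    ]
    return sum(ratios) / len(ratios)


# Placeholder replies, standing in for text pasted from the GUI.
replies = [
    "Yes, this should work once the import is fixed.",
    "Instead, try rewriting the function with a loop.",
]
print(f"stability: {answer_stability(replies):.2f}")
```

Because it only compares characters, two answers that say the same thing in different words will still score low, so treat the number as a prompt to re-read the answers rather than a verdict.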

conversational post mortem

The above criticisms may sound a bit entitled given such a great tool is being made available for free. So, hopefully, my appreciation will show through with one of the ways that CG3 shines: a debugging post mortem.

If you’re like me, it can be difficult to retrace your steps and work out why a debugging session took so much longer than you expected. By looking back at your chat history in CG3, retracing those steps is so much easier.

💡 You can either work backwards from the solution or, my favourite, work forwards from the beginning of the debugging session. Either way, you will be able to see the arc of the debugging session and get insights about how your brain and your tech stack work. Thanks CG3!

summary

I hope this post has helped you understand some of the quirks of using CG3 generally and specifically to debug code. Because it usually works so smoothly, when I hit one of those quirks I can get disproportionately frustrated. I am aspiring to replace that frustration with the realisation that I’m getting frustrated at the opportunity to access a Large Language Model, free of charge.

What a time to be alive!